Background

Collection must be the most commonly used data model in programming. And there are a number of data structure that represents collection in memory, in which Array and Liked List must be the most well known ones.

When dealing with collection, filtering must be one of the most widely used operation being used. Such as find item(s) satisfies specific criteria from the collection. Although filtering is used a lot, but it isn’t actually a simple or easy operation to apply, especially dealing with tremendous data volume.

In fact, there are a number of derived topics about collection filtering, which is impossible to cover them all in the article. This article will focus on bit operation, probably a not widely known but really interesting topic to introduce.

Problem: Find the missing integer

Problem

Suppose there is a collection of integersL.

Name the collection that contains integers from 1 toNasG

ThenLcontains all the elements ofGexcept one numberx.

Items inLare in random order.

Findx.

TIP: This problem be too easy to experienced developers and algorithm hackers. But it is a good opening words to the following 2 problems in this series. Also it reveals the core concept of some other advanced technologies, such as CRC or bloom filter.

When got this problem, the very intuitive(means do it manually by human being) idea is to compare the elements in L and G, find each element in L and remove it from G, then the only left item in G is x.

Here is the code:

|

|

Well this is a work but brute algorithm, which is not efficient in both time and space:

- Instantiate

Gmeans it has space complexityN - Find and remove

ifromGmeans it has time complexity atO(N*Log(N))

Yes, the algorithm can be optimized, with bit operation. In fact the problem can be resolved with time complexity O(N) and space complexity O(1).

Exclusive-Or Operation

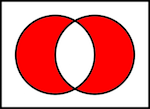

Exclusive-Or(Or XOR for short, written as ^ in this article) is a basic bit operation, here is an Venn Diagram explains it:

XOR is an important bit operation because it has following interesting features:

- A ^ B = B ^ A (aka Commutativity)

- (A ^ B) ^ C = A ^ (B ^ C) (aka Associativity)

- A ^ A = 0

- A ^ 0 = A

Commutativity and Associativity ensure that XOR can be used despite of order. The 3rd and 4th feature establishes a critical position for XOR in cryptology and encoding.

Solution: with XOR

By making use of XOR, previous problem can ben resolved in a much more graceful way:

- Given

yis thexorresult of all items inG - And

zis thexorresult of all items inL - Then

x = y ^ z

|

|

Let’s prove it mathematically:

Actually the code can be more concise by using reduce, with 2-lines of code

|

|

Or C version:

Extending problems

So far the problem has been gracefully resolved. But it is yet the end, here is a couple of extending questions:

- If

Gis collection with random but unique numbers (Not continues integer from 1 toN). How to write the code? - Based on

1, if there are 2 numbers are missing? How to find them both? - Based on

1, butLcontains the members fromGexceptxfor exactly 2 times. That meansLmight contain 0 or 1x, but exactly 2 for any other members fromG. How to findx? - Based on

1, butGisn’t a collection of integer, but a collection of web urls. How to find the one missing url? - Based on

4,Gis a collection of url,Lis subset ofG(might contains fewer members other than exactlyN- 1). How can we know a randomly picked member fromGis absolutely not contained byL. Given the tolerance to mistake on saying a member fromGis inLbut it doesn’t.

These problems will be explained in the following posts.